anchor

commited on

add vae, lcm, t2i model path

Browse files- lcm/lcm-lora-sdv1-5/.gitattributes +35 -0

- lcm/lcm-lora-sdv1-5/README.md +217 -0

- lcm/lcm-lora-sdv1-5/image.png +0 -0

- lcm/lcm-lora-sdv1-5/pytorch_lora_weights.safetensors +3 -0

- t2i/sd1.5/majicmixRealv6Fp16/feature_extractor/preprocessor_config.json +28 -0

- t2i/sd1.5/majicmixRealv6Fp16/model_index.json +29 -0

- t2i/sd1.5/majicmixRealv6Fp16/model_index.json.bk +33 -0

- t2i/sd1.5/majicmixRealv6Fp16/scheduler/scheduler_config.json +18 -0

- t2i/sd1.5/majicmixRealv6Fp16/text_encoder/config.json +25 -0

- t2i/sd1.5/majicmixRealv6Fp16/text_encoder/pytorch_model.bin +3 -0

- t2i/sd1.5/majicmixRealv6Fp16/tokenizer/merges.txt +0 -0

- t2i/sd1.5/majicmixRealv6Fp16/tokenizer/special_tokens_map.json +24 -0

- t2i/sd1.5/majicmixRealv6Fp16/tokenizer/tokenizer_config.json +34 -0

- t2i/sd1.5/majicmixRealv6Fp16/tokenizer/vocab.json +0 -0

- t2i/sd1.5/majicmixRealv6Fp16/unet/config.json +60 -0

- t2i/sd1.5/majicmixRealv6Fp16/unet/diffusion_pytorch_model.bin +3 -0

- t2i/sd1.5/majicmixRealv6Fp16/vae/config.json +30 -0

- t2i/sd1.5/majicmixRealv6Fp16/vae/diffusion_pytorch_model.bin +3 -0

- vae/sd-vae-ft-mse/.gitattributes +33 -0

- vae/sd-vae-ft-mse/README.md +83 -0

- vae/sd-vae-ft-mse/config.json +29 -0

- vae/sd-vae-ft-mse/diffusion_pytorch_model.bin +3 -0

- vae/sd-vae-ft-mse/diffusion_pytorch_model.safetensors +3 -0

lcm/lcm-lora-sdv1-5/.gitattributes

ADDED

|

@@ -0,0 +1,35 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

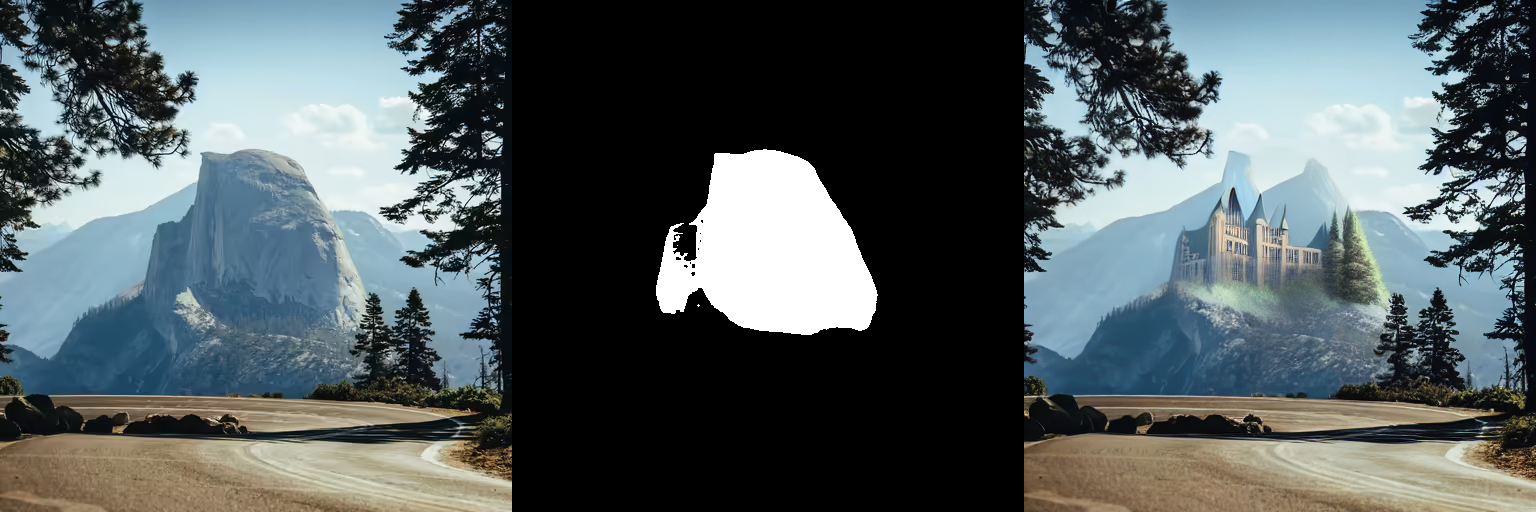

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

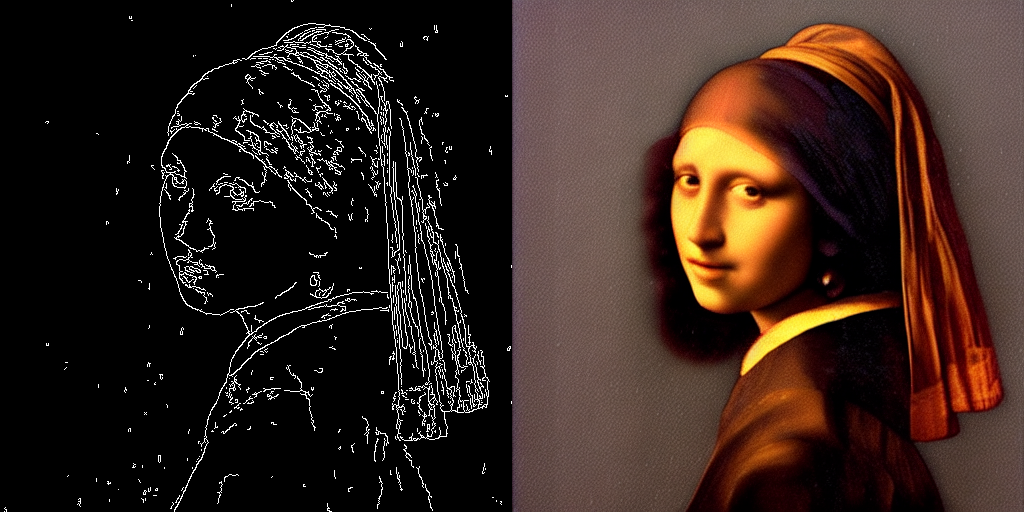

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

lcm/lcm-lora-sdv1-5/README.md

ADDED

|

@@ -0,0 +1,217 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

library_name: diffusers

|

| 3 |

+

base_model: runwayml/stable-diffusion-v1-5

|

| 4 |

+

tags:

|

| 5 |

+

- lora

|

| 6 |

+

- text-to-image

|

| 7 |

+

license: openrail++

|

| 8 |

+

inference: false

|

| 9 |

+

---

|

| 10 |

+

|

| 11 |

+

# Latent Consistency Model (LCM) LoRA: SDv1-5

|

| 12 |

+

|

| 13 |

+

Latent Consistency Model (LCM) LoRA was proposed in [LCM-LoRA: A universal Stable-Diffusion Acceleration Module](https://arxiv.org/abs/2311.05556)

|

| 14 |

+

by *Simian Luo, Yiqin Tan, Suraj Patil, Daniel Gu et al.*

|

| 15 |

+

|

| 16 |

+

It is a distilled consistency adapter for [`runwayml/stable-diffusion-v1-5`](https://huggingface.co/runwayml/stable-diffusion-v1-5) that allows

|

| 17 |

+

to reduce the number of inference steps to only between **2 - 8 steps**.

|

| 18 |

+

|

| 19 |

+

| Model | Params / M |

|

| 20 |

+

|----------------------------------------------------------------------------|------------|

|

| 21 |

+

| [**lcm-lora-sdv1-5**](https://huggingface.co/latent-consistency/lcm-lora-sdv1-5) | **67.5** |

|

| 22 |

+

| [lcm-lora-ssd-1b](https://huggingface.co/latent-consistency/lcm-lora-ssd-1b) | 105 |

|

| 23 |

+

| [lcm-lora-sdxl](https://huggingface.co/latent-consistency/lcm-lora-sdxl) | 197M |

|

| 24 |

+

|

| 25 |

+

## Usage

|

| 26 |

+

|

| 27 |

+

LCM-LoRA is supported in 🤗 Hugging Face Diffusers library from version v0.23.0 onwards. To run the model, first

|

| 28 |

+

install the latest version of the Diffusers library as well as `peft`, `accelerate` and `transformers`.

|

| 29 |

+

audio dataset from the Hugging Face Hub:

|

| 30 |

+

|

| 31 |

+

```bash

|

| 32 |

+

pip install --upgrade pip

|

| 33 |

+

pip install --upgrade diffusers transformers accelerate peft

|

| 34 |

+

```

|

| 35 |

+

|

| 36 |

+

***Note: For detailed usage examples we recommend you to check out our official [LCM-LoRA docs](https://huggingface.co/docs/diffusers/main/en/using-diffusers/inference_with_lcm_lora)***

|

| 37 |

+

|

| 38 |

+

### Text-to-Image

|

| 39 |

+

|

| 40 |

+

The adapter can be loaded with SDv1-5 or deviratives. Here we use [`Lykon/dreamshaper-7`](https://huggingface.co/Lykon/dreamshaper-7). Next, the scheduler needs to be changed to [`LCMScheduler`](https://huggingface.co/docs/diffusers/v0.22.3/en/api/schedulers/lcm#diffusers.LCMScheduler) and we can reduce the number of inference steps to just 2 to 8 steps.

|

| 41 |

+

Please make sure to either disable `guidance_scale` or use values between 1.0 and 2.0.

|

| 42 |

+

|

| 43 |

+

```python

|

| 44 |

+

import torch

|

| 45 |

+

from diffusers import LCMScheduler, AutoPipelineForText2Image

|

| 46 |

+

|

| 47 |

+

model_id = "Lykon/dreamshaper-7"

|

| 48 |

+

adapter_id = "latent-consistency/lcm-lora-sdv1-5"

|

| 49 |

+

|

| 50 |

+

pipe = AutoPipelineForText2Image.from_pretrained(model_id, torch_dtype=torch.float16, variant="fp16")

|

| 51 |

+

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

| 52 |

+

pipe.to("cuda")

|

| 53 |

+

|

| 54 |

+

# load and fuse lcm lora

|

| 55 |

+

pipe.load_lora_weights(adapter_id)

|

| 56 |

+

pipe.fuse_lora()

|

| 57 |

+

|

| 58 |

+

|

| 59 |

+

prompt = "Self-portrait oil painting, a beautiful cyborg with golden hair, 8k"

|

| 60 |

+

|

| 61 |

+

# disable guidance_scale by passing 0

|

| 62 |

+

image = pipe(prompt=prompt, num_inference_steps=4, guidance_scale=0).images[0]

|

| 63 |

+

```

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

### Image-to-Image

|

| 68 |

+

|

| 69 |

+

LCM-LoRA can be applied to image-to-image tasks too. Let's look at how we can perform image-to-image generation with LCMs. For this example we'll use the [dreamshaper-7](https://huggingface.co/Lykon/dreamshaper-7) model and the LCM-LoRA for `stable-diffusion-v1-5 `.

|

| 70 |

+

|

| 71 |

+

```python

|

| 72 |

+

import torch

|

| 73 |

+

from diffusers import AutoPipelineForImage2Image, LCMScheduler

|

| 74 |

+

from diffusers.utils import make_image_grid, load_image

|

| 75 |

+

|

| 76 |

+

pipe = AutoPipelineForImage2Image.from_pretrained(

|

| 77 |

+

"Lykon/dreamshaper-7",

|

| 78 |

+

torch_dtype=torch.float16,

|

| 79 |

+

variant="fp16",

|

| 80 |

+

).to("cuda")

|

| 81 |

+

|

| 82 |

+

# set scheduler

|

| 83 |

+

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

| 84 |

+

|

| 85 |

+

# load LCM-LoRA

|

| 86 |

+

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

| 87 |

+

pipe.fuse_lora()

|

| 88 |

+

|

| 89 |

+

# prepare image

|

| 90 |

+

url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/img2img-init.png"

|

| 91 |

+

init_image = load_image(url)

|

| 92 |

+

prompt = "Astronauts in a jungle, cold color palette, muted colors, detailed, 8k"

|

| 93 |

+

|

| 94 |

+

# pass prompt and image to pipeline

|

| 95 |

+

generator = torch.manual_seed(0)

|

| 96 |

+

image = pipe(

|

| 97 |

+

prompt,

|

| 98 |

+

image=init_image,

|

| 99 |

+

num_inference_steps=4,

|

| 100 |

+

guidance_scale=1,

|

| 101 |

+

strength=0.6,

|

| 102 |

+

generator=generator

|

| 103 |

+

).images[0]

|

| 104 |

+

make_image_grid([init_image, image], rows=1, cols=2)

|

| 105 |

+

```

|

| 106 |

+

|

| 107 |

+

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

### Inpainting

|

| 111 |

+

|

| 112 |

+

LCM-LoRA can be used for inpainting as well.

|

| 113 |

+

|

| 114 |

+

```python

|

| 115 |

+

import torch

|

| 116 |

+

from diffusers import AutoPipelineForInpainting, LCMScheduler

|

| 117 |

+

from diffusers.utils import load_image, make_image_grid

|

| 118 |

+

|

| 119 |

+

pipe = AutoPipelineForInpainting.from_pretrained(

|

| 120 |

+

"runwayml/stable-diffusion-inpainting",

|

| 121 |

+

torch_dtype=torch.float16,

|

| 122 |

+

variant="fp16",

|

| 123 |

+

).to("cuda")

|

| 124 |

+

|

| 125 |

+

# set scheduler

|

| 126 |

+

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

| 127 |

+

|

| 128 |

+

# load LCM-LoRA

|

| 129 |

+

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

| 130 |

+

pipe.fuse_lora()

|

| 131 |

+

|

| 132 |

+

# load base and mask image

|

| 133 |

+

init_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint.png")

|

| 134 |

+

mask_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint_mask.png")

|

| 135 |

+

|

| 136 |

+

# generator = torch.Generator("cuda").manual_seed(92)

|

| 137 |

+

prompt = "concept art digital painting of an elven castle, inspired by lord of the rings, highly detailed, 8k"

|

| 138 |

+

generator = torch.manual_seed(0)

|

| 139 |

+

image = pipe(

|

| 140 |

+

prompt=prompt,

|

| 141 |

+

image=init_image,

|

| 142 |

+

mask_image=mask_image,

|

| 143 |

+

generator=generator,

|

| 144 |

+

num_inference_steps=4,

|

| 145 |

+

guidance_scale=4,

|

| 146 |

+

).images[0]

|

| 147 |

+

make_image_grid([init_image, mask_image, image], rows=1, cols=3)

|

| 148 |

+

```

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

|

| 152 |

+

|

| 153 |

+

### ControlNet

|

| 154 |

+

|

| 155 |

+

For this example, we'll use the SD-v1-5 model and the LCM-LoRA for SD-v1-5 with canny ControlNet.

|

| 156 |

+

|

| 157 |

+

```python

|

| 158 |

+

import torch

|

| 159 |

+

import cv2

|

| 160 |

+

import numpy as np

|

| 161 |

+

from PIL import Image

|

| 162 |

+

|

| 163 |

+

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, LCMScheduler

|

| 164 |

+

from diffusers.utils import load_image

|

| 165 |

+

|

| 166 |

+

image = load_image(

|

| 167 |

+

"https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"

|

| 168 |

+

).resize((512, 512))

|

| 169 |

+

|

| 170 |

+

image = np.array(image)

|

| 171 |

+

|

| 172 |

+

low_threshold = 100

|

| 173 |

+

high_threshold = 200

|

| 174 |

+

|

| 175 |

+

image = cv2.Canny(image, low_threshold, high_threshold)

|

| 176 |

+

image = image[:, :, None]

|

| 177 |

+

image = np.concatenate([image, image, image], axis=2)

|

| 178 |

+

canny_image = Image.fromarray(image)

|

| 179 |

+

|

| 180 |

+

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-canny", torch_dtype=torch.float16)

|

| 181 |

+

pipe = StableDiffusionControlNetPipeline.from_pretrained(

|

| 182 |

+

"runwayml/stable-diffusion-v1-5",

|

| 183 |

+

controlnet=controlnet,

|

| 184 |

+

torch_dtype=torch.float16,

|

| 185 |

+

safety_checker=None,

|

| 186 |

+

variant="fp16"

|

| 187 |

+

).to("cuda")

|

| 188 |

+

|

| 189 |

+

# set scheduler

|

| 190 |

+

pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

|

| 191 |

+

|

| 192 |

+

# load LCM-LoRA

|

| 193 |

+

pipe.load_lora_weights("latent-consistency/lcm-lora-sdv1-5")

|

| 194 |

+

|

| 195 |

+

generator = torch.manual_seed(0)

|

| 196 |

+

image = pipe(

|

| 197 |

+

"the mona lisa",

|

| 198 |

+

image=canny_image,

|

| 199 |

+

num_inference_steps=4,

|

| 200 |

+

guidance_scale=1.5,

|

| 201 |

+

controlnet_conditioning_scale=0.8,

|

| 202 |

+

cross_attention_kwargs={"scale": 1},

|

| 203 |

+

generator=generator,

|

| 204 |

+

).images[0]

|

| 205 |

+

make_image_grid([canny_image, image], rows=1, cols=2)

|

| 206 |

+

```

|

| 207 |

+

|

| 208 |

+

|

| 209 |

+

|

| 210 |

+

|

| 211 |

+

## Speed Benchmark

|

| 212 |

+

|

| 213 |

+

TODO

|

| 214 |

+

|

| 215 |

+

## Training

|

| 216 |

+

|

| 217 |

+

TODO

|

lcm/lcm-lora-sdv1-5/image.png

ADDED

|

lcm/lcm-lora-sdv1-5/pytorch_lora_weights.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8f90d840e075ff588a58e22c6586e2ae9a6f7922996ee6649a7f01072333afe4

|

| 3 |

+

size 134621556

|

t2i/sd1.5/majicmixRealv6Fp16/feature_extractor/preprocessor_config.json

ADDED

|

@@ -0,0 +1,28 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"crop_size": {

|

| 3 |

+

"height": 224,

|

| 4 |

+

"width": 224

|

| 5 |

+

},

|

| 6 |

+

"do_center_crop": true,

|

| 7 |

+

"do_convert_rgb": true,

|

| 8 |

+

"do_normalize": true,

|

| 9 |

+

"do_rescale": true,

|

| 10 |

+

"do_resize": true,

|

| 11 |

+

"feature_extractor_type": "CLIPFeatureExtractor",

|

| 12 |

+

"image_mean": [

|

| 13 |

+

0.48145466,

|

| 14 |

+

0.4578275,

|

| 15 |

+

0.40821073

|

| 16 |

+

],

|

| 17 |

+

"image_processor_type": "CLIPFeatureExtractor",

|

| 18 |

+

"image_std": [

|

| 19 |

+

0.26862954,

|

| 20 |

+

0.26130258,

|

| 21 |

+

0.27577711

|

| 22 |

+

],

|

| 23 |

+

"resample": 3,

|

| 24 |

+

"rescale_factor": 0.00392156862745098,

|

| 25 |

+

"size": {

|

| 26 |

+

"shortest_edge": 224

|

| 27 |

+

}

|

| 28 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/model_index.json

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "StableDiffusionPipeline",

|

| 3 |

+

"_diffusers_version": "0.16.0",

|

| 4 |

+

"feature_extractor": [

|

| 5 |

+

"transformers",

|

| 6 |

+

"CLIPFeatureExtractor"

|

| 7 |

+

],

|

| 8 |

+

"requires_safety_checker": false,

|

| 9 |

+

"scheduler": [

|

| 10 |

+

"diffusers",

|

| 11 |

+

"PNDMScheduler"

|

| 12 |

+

],

|

| 13 |

+

"text_encoder": [

|

| 14 |

+

"transformers",

|

| 15 |

+

"CLIPTextModel"

|

| 16 |

+

],

|

| 17 |

+

"tokenizer": [

|

| 18 |

+

"transformers",

|

| 19 |

+

"CLIPTokenizer"

|

| 20 |

+

],

|

| 21 |

+

"unet": [

|

| 22 |

+

"diffusers",

|

| 23 |

+

"UNet2DConditionModel"

|

| 24 |

+

],

|

| 25 |

+

"vae": [

|

| 26 |

+

"diffusers",

|

| 27 |

+

"AutoencoderKL"

|

| 28 |

+

]

|

| 29 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/model_index.json.bk

ADDED

|

@@ -0,0 +1,33 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "StableDiffusionPipeline",

|

| 3 |

+

"_diffusers_version": "0.16.0",

|

| 4 |

+

"feature_extractor": [

|

| 5 |

+

"transformers",

|

| 6 |

+

"CLIPFeatureExtractor"

|

| 7 |

+

],

|

| 8 |

+

"requires_safety_checker": true,

|

| 9 |

+

"safety_checker": [

|

| 10 |

+

"stable_diffusion",

|

| 11 |

+

"StableDiffusionSafetyChecker"

|

| 12 |

+

],

|

| 13 |

+

"scheduler": [

|

| 14 |

+

"diffusers",

|

| 15 |

+

"PNDMScheduler"

|

| 16 |

+

],

|

| 17 |

+

"text_encoder": [

|

| 18 |

+

"transformers",

|

| 19 |

+

"CLIPTextModel"

|

| 20 |

+

],

|

| 21 |

+

"tokenizer": [

|

| 22 |

+

"transformers",

|

| 23 |

+

"CLIPTokenizer"

|

| 24 |

+

],

|

| 25 |

+

"unet": [

|

| 26 |

+

"diffusers",

|

| 27 |

+

"UNet2DConditionModel"

|

| 28 |

+

],

|

| 29 |

+

"vae": [

|

| 30 |

+

"diffusers",

|

| 31 |

+

"AutoencoderKL"

|

| 32 |

+

]

|

| 33 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,18 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "PNDMScheduler",

|

| 3 |

+

"_diffusers_version": "0.16.0",

|

| 4 |

+

"beta_end": 0.012,

|

| 5 |

+

"beta_schedule": "scaled_linear",

|

| 6 |

+

"beta_start": 0.00085,

|

| 7 |

+

"clip_sample": false,

|

| 8 |

+

"clip_sample_range": 1.0,

|

| 9 |

+

"dynamic_thresholding_ratio": 0.995,

|

| 10 |

+

"num_train_timesteps": 1000,

|

| 11 |

+

"prediction_type": "epsilon",

|

| 12 |

+

"sample_max_value": 1.0,

|

| 13 |

+

"set_alpha_to_one": false,

|

| 14 |

+

"skip_prk_steps": true,

|

| 15 |

+

"steps_offset": 1,

|

| 16 |

+

"thresholding": false,

|

| 17 |

+

"trained_betas": null

|

| 18 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/text_encoder/config.json

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "openai/clip-vit-large-patch14",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CLIPTextModel"

|

| 5 |

+

],

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"dropout": 0.0,

|

| 9 |

+

"eos_token_id": 2,

|

| 10 |

+

"hidden_act": "quick_gelu",

|

| 11 |

+

"hidden_size": 768,

|

| 12 |

+

"initializer_factor": 1.0,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 3072,

|

| 15 |

+

"layer_norm_eps": 1e-05,

|

| 16 |

+

"max_position_embeddings": 77,

|

| 17 |

+

"model_type": "clip_text_model",

|

| 18 |

+

"num_attention_heads": 12,

|

| 19 |

+

"num_hidden_layers": 12,

|

| 20 |

+

"pad_token_id": 1,

|

| 21 |

+

"projection_dim": 768,

|

| 22 |

+

"torch_dtype": "float16",

|

| 23 |

+

"transformers_version": "4.26.0",

|

| 24 |

+

"vocab_size": 49408

|

| 25 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/text_encoder/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5d7ddacad155e3f98b3f3f1bcac51e264977cd8383e5eef5957a80d862c81adc

|

| 3 |

+

size 246186081

|

t2i/sd1.5/majicmixRealv6Fp16/tokenizer/merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

t2i/sd1.5/majicmixRealv6Fp16/tokenizer/special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<|startoftext|>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|endoftext|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "<|endoftext|>",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<|endoftext|>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/tokenizer/tokenizer_config.json

ADDED

|

@@ -0,0 +1,34 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"add_prefix_space": false,

|

| 3 |

+

"bos_token": {

|

| 4 |

+

"__type": "AddedToken",

|

| 5 |

+

"content": "<|startoftext|>",

|

| 6 |

+

"lstrip": false,

|

| 7 |

+

"normalized": true,

|

| 8 |

+

"rstrip": false,

|

| 9 |

+

"single_word": false

|

| 10 |

+

},

|

| 11 |

+

"do_lower_case": true,

|

| 12 |

+

"eos_token": {

|

| 13 |

+

"__type": "AddedToken",

|

| 14 |

+

"content": "<|endoftext|>",

|

| 15 |

+

"lstrip": false,

|

| 16 |

+

"normalized": true,

|

| 17 |

+

"rstrip": false,

|

| 18 |

+

"single_word": false

|

| 19 |

+

},

|

| 20 |

+

"errors": "replace",

|

| 21 |

+

"model_max_length": 77,

|

| 22 |

+

"name_or_path": "openai/clip-vit-large-patch14",

|

| 23 |

+

"pad_token": "<|endoftext|>",

|

| 24 |

+

"special_tokens_map_file": "./special_tokens_map.json",

|

| 25 |

+

"tokenizer_class": "CLIPTokenizer",

|

| 26 |

+

"unk_token": {

|

| 27 |

+

"__type": "AddedToken",

|

| 28 |

+

"content": "<|endoftext|>",

|

| 29 |

+

"lstrip": false,

|

| 30 |

+

"normalized": true,

|

| 31 |

+

"rstrip": false,

|

| 32 |

+

"single_word": false

|

| 33 |

+

}

|

| 34 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/tokenizer/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

t2i/sd1.5/majicmixRealv6Fp16/unet/config.json

ADDED

|

@@ -0,0 +1,60 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "UNet2DConditionModel",

|

| 3 |

+

"_diffusers_version": "0.16.0",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"addition_embed_type": null,

|

| 6 |

+

"addition_embed_type_num_heads": 64,

|

| 7 |

+

"attention_head_dim": 8,

|

| 8 |

+

"block_out_channels": [

|

| 9 |

+

320,

|

| 10 |

+

640,

|

| 11 |

+

1280,

|

| 12 |

+

1280

|

| 13 |

+

],

|

| 14 |

+

"center_input_sample": false,

|

| 15 |

+

"class_embed_type": null,

|

| 16 |

+

"class_embeddings_concat": false,

|

| 17 |

+

"conv_in_kernel": 3,

|

| 18 |

+

"conv_out_kernel": 3,

|

| 19 |

+

"cross_attention_dim": 768,

|

| 20 |

+

"cross_attention_norm": null,

|

| 21 |

+

"down_block_types": [

|

| 22 |

+

"CrossAttnDownBlock2D",

|

| 23 |

+

"CrossAttnDownBlock2D",

|

| 24 |

+

"CrossAttnDownBlock2D",

|

| 25 |

+

"DownBlock2D"

|

| 26 |

+

],

|

| 27 |

+

"downsample_padding": 1,

|

| 28 |

+

"dual_cross_attention": false,

|

| 29 |

+

"encoder_hid_dim": null,

|

| 30 |

+

"flip_sin_to_cos": true,

|

| 31 |

+

"freq_shift": 0,

|

| 32 |

+

"in_channels": 4,

|

| 33 |

+

"layers_per_block": 2,

|

| 34 |

+

"mid_block_only_cross_attention": null,

|

| 35 |

+

"mid_block_scale_factor": 1,

|

| 36 |

+

"mid_block_type": "UNetMidBlock2DCrossAttn",

|

| 37 |

+

"norm_eps": 1e-05,

|

| 38 |

+

"norm_num_groups": 32,

|

| 39 |

+

"num_class_embeds": null,

|

| 40 |

+

"only_cross_attention": false,

|

| 41 |

+

"out_channels": 4,

|

| 42 |

+

"projection_class_embeddings_input_dim": null,

|

| 43 |

+

"resnet_out_scale_factor": 1.0,

|

| 44 |

+

"resnet_skip_time_act": false,

|

| 45 |

+

"resnet_time_scale_shift": "default",

|

| 46 |

+

"sample_size": 64,

|

| 47 |

+

"time_cond_proj_dim": null,

|

| 48 |

+

"time_embedding_act_fn": null,

|

| 49 |

+

"time_embedding_dim": null,

|

| 50 |

+

"time_embedding_type": "positional",

|

| 51 |

+

"timestep_post_act": null,

|

| 52 |

+

"up_block_types": [

|

| 53 |

+

"UpBlock2D",

|

| 54 |

+

"CrossAttnUpBlock2D",

|

| 55 |

+

"CrossAttnUpBlock2D",

|

| 56 |

+

"CrossAttnUpBlock2D"

|

| 57 |

+

],

|

| 58 |

+

"upcast_attention": false,

|

| 59 |

+

"use_linear_projection": false

|

| 60 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/unet/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:94a829879d9268040377459510ae6ec5af2c8b4515a69936f8cbd6bb8ea286eb

|

| 3 |

+

size 1719324453

|

t2i/sd1.5/majicmixRealv6Fp16/vae/config.json

ADDED

|

@@ -0,0 +1,30 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "AutoencoderKL",

|

| 3 |

+

"_diffusers_version": "0.16.0",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"block_out_channels": [

|

| 6 |

+

128,

|

| 7 |

+

256,

|

| 8 |

+

512,

|

| 9 |

+

512

|

| 10 |

+

],

|

| 11 |

+

"down_block_types": [

|

| 12 |

+

"DownEncoderBlock2D",

|

| 13 |

+

"DownEncoderBlock2D",

|

| 14 |

+

"DownEncoderBlock2D",

|

| 15 |

+

"DownEncoderBlock2D"

|

| 16 |

+

],

|

| 17 |

+

"in_channels": 3,

|

| 18 |

+

"latent_channels": 4,

|

| 19 |

+

"layers_per_block": 2,

|

| 20 |

+

"norm_num_groups": 32,

|

| 21 |

+

"out_channels": 3,

|

| 22 |

+

"sample_size": 512,

|

| 23 |

+

"scaling_factor": 0.18215,

|

| 24 |

+

"up_block_types": [

|

| 25 |

+

"UpDecoderBlock2D",

|

| 26 |

+

"UpDecoderBlock2D",

|

| 27 |

+

"UpDecoderBlock2D",

|

| 28 |

+

"UpDecoderBlock2D"

|

| 29 |

+

]

|

| 30 |

+

}

|

t2i/sd1.5/majicmixRealv6Fp16/vae/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e74bb1390c97f146afccbbb42e65c1d295d0f1e61f7886a240b8a4e5ad622328

|

| 3 |

+

size 167404145

|

vae/sd-vae-ft-mse/.gitattributes

ADDED

|

@@ -0,0 +1,33 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

diffusion_pytorch_model.safetensors filter=lfs diff=lfs merge=lfs -text

|

vae/sd-vae-ft-mse/README.md

ADDED

|

@@ -0,0 +1,83 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: mit

|

| 3 |

+

tags:

|

| 4 |

+

- stable-diffusion

|

| 5 |

+

- stable-diffusion-diffusers

|

| 6 |

+

inference: false

|

| 7 |

+

---

|

| 8 |

+

# Improved Autoencoders

|

| 9 |

+

|

| 10 |

+

## Utilizing

|

| 11 |

+

These weights are intended to be used with the [🧨 diffusers library](https://github.com/huggingface/diffusers). If you are looking for the model to use with the original [CompVis Stable Diffusion codebase](https://github.com/CompVis/stable-diffusion), [come here](https://huggingface.co/stabilityai/sd-vae-ft-mse-original).

|

| 12 |

+

|

| 13 |

+

#### How to use with 🧨 diffusers

|

| 14 |

+

You can integrate this fine-tuned VAE decoder to your existing `diffusers` workflows, by including a `vae` argument to the `StableDiffusionPipeline`

|

| 15 |

+

```py

|

| 16 |

+

from diffusers.models import AutoencoderKL

|

| 17 |

+

from diffusers import StableDiffusionPipeline

|

| 18 |

+

|

| 19 |

+

model = "CompVis/stable-diffusion-v1-4"

|

| 20 |

+

vae = AutoencoderKL.from_pretrained("stabilityai/sd-vae-ft-mse")

|

| 21 |

+

pipe = StableDiffusionPipeline.from_pretrained(model, vae=vae)

|

| 22 |

+

```

|

| 23 |

+

|

| 24 |

+

## Decoder Finetuning

|

| 25 |

+

We publish two kl-f8 autoencoder versions, finetuned from the original [kl-f8 autoencoder](https://github.com/CompVis/latent-diffusion#pretrained-autoencoding-models) on a 1:1 ratio of [LAION-Aesthetics](https://laion.ai/blog/laion-aesthetics/) and LAION-Humans, an unreleased subset containing only SFW images of humans. The intent was to fine-tune on the Stable Diffusion training set (the autoencoder was originally trained on OpenImages) but also enrich the dataset with images of humans to improve the reconstruction of faces.

|

| 26 |

+

The first, _ft-EMA_, was resumed from the original checkpoint, trained for 313198 steps and uses EMA weights. It uses the same loss configuration as the original checkpoint (L1 + LPIPS).

|

| 27 |

+

The second, _ft-MSE_, was resumed from _ft-EMA_ and uses EMA weights and was trained for another 280k steps using a different loss, with more emphasis

|

| 28 |

+

on MSE reconstruction (MSE + 0.1 * LPIPS). It produces somewhat ``smoother'' outputs. The batch size for both versions was 192 (16 A100s, batch size 12 per GPU).

|

| 29 |

+

To keep compatibility with existing models, only the decoder part was finetuned; the checkpoints can be used as a drop-in replacement for the existing autoencoder.

|

| 30 |

+

|

| 31 |

+

_Original kl-f8 VAE vs f8-ft-EMA vs f8-ft-MSE_

|

| 32 |

+

|

| 33 |

+

## Evaluation

|

| 34 |

+

### COCO 2017 (256x256, val, 5000 images)

|

| 35 |

+

| Model | train steps | rFID | PSNR | SSIM | PSIM | Link | Comments

|

| 36 |

+

|----------|---------|------|--------------|---------------|---------------|-----------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------|

|

| 37 |

+

| | | | | | | | |

|

| 38 |

+

| original | 246803 | 4.99 | 23.4 +/- 3.8 | 0.69 +/- 0.14 | 1.01 +/- 0.28 | https://ommer-lab.com/files/latent-diffusion/kl-f8.zip | as used in SD |

|

| 39 |

+

| ft-EMA | 560001 | 4.42 | 23.8 +/- 3.9 | 0.69 +/- 0.13 | 0.96 +/- 0.27 | https://huggingface.co/stabilityai/sd-vae-ft-ema-original/resolve/main/vae-ft-ema-560000-ema-pruned.ckpt | slightly better overall, with EMA |

|

| 40 |

+

| ft-MSE | 840001 | 4.70 | 24.5 +/- 3.7 | 0.71 +/- 0.13 | 0.92 +/- 0.27 | https://huggingface.co/stabilityai/sd-vae-ft-mse-original/resolve/main/vae-ft-mse-840000-ema-pruned.ckpt | resumed with EMA from ft-EMA, emphasis on MSE (rec. loss = MSE + 0.1 * LPIPS), smoother outputs |

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

### LAION-Aesthetics 5+ (256x256, subset, 10000 images)

|

| 44 |

+

| Model | train steps | rFID | PSNR | SSIM | PSIM | Link | Comments

|

| 45 |

+

|----------|-----------|------|--------------|---------------|---------------|-----------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------|

|

| 46 |

+

| | | | | | | | |

|

| 47 |

+

| original | 246803 | 2.61 | 26.0 +/- 4.4 | 0.81 +/- 0.12 | 0.75 +/- 0.36 | https://ommer-lab.com/files/latent-diffusion/kl-f8.zip | as used in SD |

|

| 48 |

+

| ft-EMA | 560001 | 1.77 | 26.7 +/- 4.8 | 0.82 +/- 0.12 | 0.67 +/- 0.34 | https://huggingface.co/stabilityai/sd-vae-ft-ema-original/resolve/main/vae-ft-ema-560000-ema-pruned.ckpt | slightly better overall, with EMA |

|

| 49 |

+

| ft-MSE | 840001 | 1.88 | 27.3 +/- 4.7 | 0.83 +/- 0.11 | 0.65 +/- 0.34 | https://huggingface.co/stabilityai/sd-vae-ft-mse-original/resolve/main/vae-ft-mse-840000-ema-pruned.ckpt | resumed with EMA from ft-EMA, emphasis on MSE (rec. loss = MSE + 0.1 * LPIPS), smoother outputs |

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

### Visual

|

| 53 |

+

_Visualization of reconstructions on 256x256 images from the COCO2017 validation dataset._

|

| 54 |

+

|

| 55 |

+

<p align="center">

|

| 56 |

+

<br>

|

| 57 |

+

<b>

|

| 58 |

+

256x256: ft-EMA (left), ft-MSE (middle), original (right)</b>

|

| 59 |

+

</p>

|

| 60 |

+

|

| 61 |

+

<p align="center">

|

| 62 |

+

<img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00025_merged.png />

|

| 63 |

+

</p>

|

| 64 |

+

|

| 65 |

+

<p align="center">

|

| 66 |

+

<img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00011_merged.png />

|

| 67 |

+

</p>

|

| 68 |

+

|

| 69 |

+

<p align="center">

|

| 70 |

+

<img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00037_merged.png />

|

| 71 |

+

</p>

|

| 72 |

+

|

| 73 |

+

<p align="center">

|

| 74 |

+

<img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00043_merged.png />

|

| 75 |

+

</p>

|

| 76 |

+

|

| 77 |

+

<p align="center">

|

| 78 |

+

<img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00053_merged.png />

|

| 79 |

+

</p>

|

| 80 |

+

|

| 81 |

+

<p align="center">

|

| 82 |

+

<img src=https://huggingface.co/stabilityai/stable-diffusion-decoder-finetune/resolve/main/eval/ae-decoder-tuning-reconstructions/merged/00029_merged.png />

|

| 83 |

+

</p>

|

vae/sd-vae-ft-mse/config.json

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "AutoencoderKL",

|

| 3 |

+

"_diffusers_version": "0.4.2",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"block_out_channels": [

|

| 6 |

+

128,

|

| 7 |

+

256,

|

| 8 |

+

512,

|

| 9 |

+

512

|

| 10 |

+

],

|

| 11 |

+

"down_block_types": [

|

| 12 |

+

"DownEncoderBlock2D",

|

| 13 |

+

"DownEncoderBlock2D",

|

| 14 |

+

"DownEncoderBlock2D",

|

| 15 |

+

"DownEncoderBlock2D"

|

| 16 |

+

],

|

| 17 |

+

"in_channels": 3,

|

| 18 |

+

"latent_channels": 4,

|

| 19 |

+

"layers_per_block": 2,

|

| 20 |

+

"norm_num_groups": 32,

|

| 21 |

+

"out_channels": 3,

|

| 22 |

+

"sample_size": 256,

|

| 23 |

+

"up_block_types": [

|

| 24 |

+

"UpDecoderBlock2D",

|

| 25 |

+

"UpDecoderBlock2D",

|

| 26 |

+

"UpDecoderBlock2D",

|

| 27 |

+

"UpDecoderBlock2D"

|

| 28 |

+

]

|

| 29 |

+

}

|

vae/sd-vae-ft-mse/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1b4889b6b1d4ce7ae320a02dedaeff1780ad77d415ea0d744b476155c6377ddc

|

| 3 |

+

size 334707217

|

vae/sd-vae-ft-mse/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a1d993488569e928462932c8c38a0760b874d166399b14414135bd9c42df5815

|

| 3 |

+

size 334643276

|