---
license: apache-2.0
pipeline_tag: image-text-to-text
tags:
- pytorch
- transformers
- mindspore
- mindnlp
- ERNIE4.5
- image-to-text
- ocr
- document-parse
- layout
- table
- formula
- chart
base_model: baidu/ERNIE-4.5-0.3B-Paddle
language:
- en
- zh
- multilingual
---

# PaddleOCR-VL: Boosting Multilingual Document Parsing via a 0.9B Ultra-Compact Vision-Language Model

[MindNLP](https://github.com/mindspore-lab/mindnlp)

[Hugging Face model](https://huggingface.co/lvyufeng/PaddleOCR-VL-0.9B)

[License](./LICENSE)

**📝 arXiv**: [Technical Report](https://arxiv.org/pdf/2510.14528)

## Introduction

**PaddleOCR-VL** is a SOTA and resource-efficient model tailored for document parsing. Its core component is PaddleOCR-VL-0.9B, a compact yet powerful vision-language model (VLM) that integrates a NaViT-style dynamic resolution visual encoder with the ERNIE-4.5-0.3B language model to enable accurate element recognition. This innovative model efficiently supports 109 languages and excels in recognizing complex elements (e.g., text, tables, formulas, and charts), while maintaining minimal resource consumption. Through comprehensive evaluations on widely used public benchmarks and in-house benchmarks, PaddleOCR-VL achieves SOTA performance in both page-level document parsing and element-level recognition. It significantly outperforms existing solutions, exhibits strong competitiveness against top-tier VLMs, and delivers fast inference speeds. These strengths make it highly suitable for practical deployment in real-world scenarios.

### **Core Features**

1. **Compact yet Powerful VLM Architecture:** We present a novel vision-language model that is specifically designed for resource-efficient inference, achieving outstanding performance in element recognition. By integrating a NaViT-style dynamic high-resolution visual encoder with the lightweight ERNIE-4.5-0.3B language model, we significantly enhance the model’s recognition capabilities and decoding efficiency. This integration maintains high accuracy while reducing computational demands, making it well-suited for efficient and practical document processing applications.

2. **SOTA Performance on Document Parsing:** PaddleOCR-VL achieves state-of-the-art performance in both page-level document parsing and element-level recognition. It significantly outperforms existing pipeline-based solutions and is strongly competitive with leading vision-language models (VLMs) in document parsing. It also excels at recognizing complex document elements, such as text, tables, formulas, and charts, which makes it suitable for a wide range of challenging content types, including handwritten text and historical documents.

3. **Multilingual Support:** PaddleOCR-VL supports 109 languages, covering major global languages, including but not limited to Chinese, English, Japanese, Latin, and Korean, as well as languages with different scripts and structures, such as Russian (Cyrillic script), Arabic, Hindi (Devanagari script), and Thai. This broad language coverage substantially enhances the applicability of our system to multilingual and globalized document processing scenarios.

### **Model Architecture**
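As a rough illustration of why a NaViT-style dynamic-resolution encoder suits documents, the sketch below counts how many patches an image would produce when it is split at its native resolution instead of being resized to a fixed square. The patch size of 14 and the ceiling-based padding are assumptions chosen for illustration, and the helper names `patch_grid` and `num_visual_tokens` are hypothetical; none of them are taken from the PaddleOCR-VL implementation.

```python
import math


def patch_grid(height: int, width: int, patch_size: int = 14) -> tuple:
    """Return (rows, cols) of patches covering an image at native resolution.

    patch_size=14 is an illustrative assumption, not the model's actual value.
    Partial patches at the border are counted as if the image were padded.
    """
    if height <= 0 or width <= 0 or patch_size <= 0:
        raise ValueError("height, width and patch_size must be positive")
    rows = math.ceil(height / patch_size)
    cols = math.ceil(width / patch_size)
    return rows, cols


def num_visual_tokens(height: int, width: int, patch_size: int = 14) -> int:
    """Number of patch tokens the encoder would see for this image size."""
    rows, cols = patch_grid(height, width, patch_size)
    return rows * cols


if __name__ == "__main__":
    # A tall page and a wide table strip yield different token counts,
    # so neither has to be squashed into one fixed input shape.
    for size in [(224, 224), (448, 224), (224, 896)]:
        print(size, patch_grid(*size), num_visual_tokens(*size))
```

Under these assumptions the token count scales with the image area, which is the property that lets a dynamic-resolution encoder keep small text legible on large pages.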

## News

* ```2025.10.19``` 🚀 MindNLP now supports [PaddleOCR-VL](https://github.com/PaddlePaddle/PaddleOCR), a 0.9B ultra-compact vision-language model for multilingual document parsing with SOTA performance.

## MindSpore Usage

### Install Dependencies

Install [MindNLP](https://github.com/mindspore-lab/mindnlp)

```bash
pip install mindspore==2.7.0
pip install mindnlp==0.5.0rc3
```

### Basic Usage

```python
import mindspore
import mindnlp  # imported before transformers so that MindNLP can patch in MindSpore support
from transformers import AutoModel, AutoProcessor, AutoTokenizer
from transformers.image_utils import load_image

# Load the model, tokenizer, and processor
model = AutoModel.from_pretrained("lvyufeng/PaddleOCR-VL-0.9B", trust_remote_code=True, dtype=mindspore.float16, device_map='auto')
tokenizer = AutoTokenizer.from_pretrained("lvyufeng/PaddleOCR-VL-0.9B")
processor = AutoProcessor.from_pretrained("lvyufeng/PaddleOCR-VL-0.9B", trust_remote_code=True)

# Load a sample image
image = load_image(
    "https://hf-mirror.com/datasets/hf-internal-testing/fixtures_got_ocr/resolve/main/image_ocr.jpg"
)

# Build the chat prompt; the query string selects the task
query = 'OCR:'
messages = [
    {
        "role": "user",
        "content": query,
    }
]
text = tokenizer.apply_chat_template(messages, tokenize=False)

# Preprocess the image and prompt, then generate with greedy decoding
inputs = processor(image, text=text, return_tensors="pt", format=True).to('cuda')
generate_ids = model.generate(**inputs, do_sample=False, num_beams=1, max_new_tokens=1024)
print(generate_ids.shape)

# Decode the generated token IDs back to text
decoded_output = processor.decode(
    generate_ids[0], skip_special_tokens=True
)
print(decoded_output)
```

### Prompts

Besides OCR, PaddleOCR-VL also supports table recognition, chart recognition, and formula recognition.

To switch tasks, set `query` to one of the following prompts:

```python
# Choose exactly one prompt as the user message content
query = "OCR:"
query = "Table Recognition:"
query = "Chart Recognition:"
query = "Formula Recognition:"
```
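To avoid typos in the prompt strings, the four prompts can be kept in a small lookup table. The following is a minimal sketch; the task keys (`"ocr"`, `"table"`, `"chart"`, `"formula"`) and the helper `build_messages` are hypothetical names introduced here for illustration, while the prompt strings and the message layout are the ones shown in this README.

```python
# Prompt strings as listed in this README; the dictionary keys are
# illustrative names, not part of the model's API.
TASK_PROMPTS = {
    "ocr": "OCR:",
    "table": "Table Recognition:",
    "chart": "Chart Recognition:",
    "formula": "Formula Recognition:",
}


def build_messages(task: str) -> list:
    """Build the single-turn chat message list for the given task key."""
    try:
        query = TASK_PROMPTS[task]
    except KeyError:
        valid = ", ".join(sorted(TASK_PROMPTS))
        raise ValueError(f"unknown task {task!r}; expected one of: {valid}") from None
    return [{"role": "user", "content": query}]


if __name__ == "__main__":
    print(build_messages("table"))
```

The returned list can be passed to `tokenizer.apply_chat_template(messages, tokenize=False)` in place of the hand-written `messages` in the Basic Usage examples.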

## PyTorch Usage

You can also use PaddleOCR-VL with PyTorch.

### Install Dependencies

```bash
pip install torch
pip install transformers==4.57.1
```

### Basic Usage

```python
import torch
from transformers import AutoModel, AutoProcessor, AutoTokenizer
from transformers.image_utils import load_image

# Load the model, tokenizer, and processor
model = AutoModel.from_pretrained("lvyufeng/PaddleOCR-VL-0.9B", trust_remote_code=True, dtype=torch.bfloat16, device_map='auto')
tokenizer = AutoTokenizer.from_pretrained("lvyufeng/PaddleOCR-VL-0.9B")
processor = AutoProcessor.from_pretrained("lvyufeng/PaddleOCR-VL-0.9B", trust_remote_code=True)

# Load a sample image
image = load_image(
    "https://hf-mirror.com/datasets/hf-internal-testing/fixtures_got_ocr/resolve/main/image_ocr.jpg"
)

# Build the chat prompt; the query string selects the task
query = 'OCR:'
messages = [
    {
        "role": "user",
        "content": query,
    }
]
text = tokenizer.apply_chat_template(messages, tokenize=False)

# Preprocess the image and prompt, and move the inputs to the device
# chosen by device_map='auto' instead of hard-coding 'cuda'
inputs = processor(image, text=text, return_tensors="pt", format=True).to(model.device)
generate_ids = model.generate(**inputs, do_sample=False, num_beams=1, max_new_tokens=1024)
print(generate_ids.shape)

# Decode the generated token IDs back to text
decoded_output = processor.decode(
    generate_ids[0], skip_special_tokens=True
)
print(decoded_output)
```
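If, as is usual for decoder-only models in `transformers`, the sequence returned by `generate` begins with the prompt tokens, the decoded text will contain the prompt as well as the recognized content. The sketch below shows one way to keep only the newly generated tokens. The helper `new_tokens_only` is a hypothetical name, and the assumptions that the prompt is echoed and that the processor output exposes `input_ids` should be checked against the actual output.

```python
from typing import List, Sequence


def new_tokens_only(generated_ids: Sequence[int], prompt_length: int) -> List[int]:
    """Drop the first `prompt_length` token IDs from a generated sequence.

    Assumes the generated sequence starts with the prompt tokens.
    """
    if prompt_length < 0 or prompt_length > len(generated_ids):
        raise ValueError("prompt_length must be between 0 and len(generated_ids)")
    return list(generated_ids[prompt_length:])


if __name__ == "__main__":
    # Toy example: the first two IDs stand in for the prompt.
    print(new_tokens_only([101, 102, 7, 8, 9], prompt_length=2))
```

With the variables from the example above, this could be used as `processor.decode(new_tokens_only(generate_ids[0].tolist(), inputs["input_ids"].shape[1]), skip_special_tokens=True)`.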

## Performance

### Page-Level Document Parsing

#### 1. OmniDocBench v1.5

##### PaddleOCR-VL achieves SOTA performance for overall, text, formula, tables and reading order on OmniDocBench v1.5

#### 2. OmniDocBench v1.0

##### PaddleOCR-VL achieves SOTA performance for almost all metrics of overall, text, formula, tables and reading order on OmniDocBench v1.0

> **Notes:**
> - The metrics are from [MinerU](https://github.com/opendatalab/MinerU), [OmniDocBench](https://github.com/opendatalab/OmniDocBench), and our own internal evaluations.

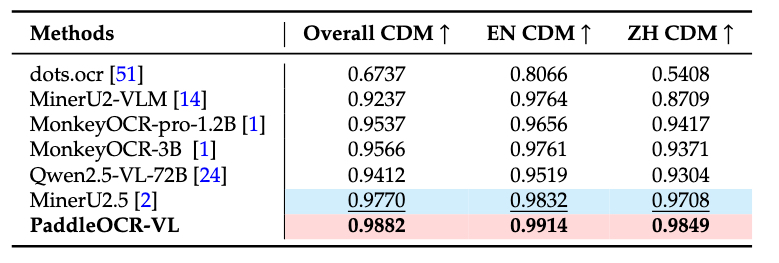

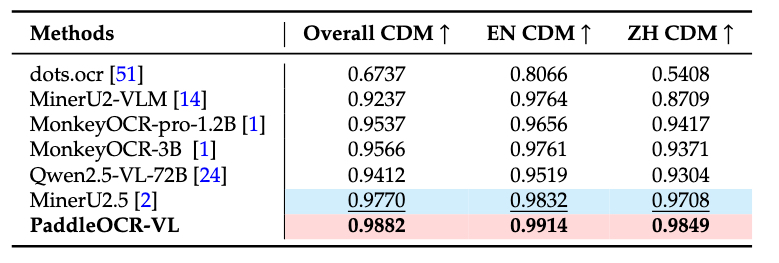

### Element-level Recognition

#### 1. Text

**Comparison of OmniDocBench-OCR-block Performance**

PaddleOCR-VL demonstrates robust and versatile capability in handling diverse document types, establishing it as the leading method in the OmniDocBench-OCR-block performance evaluation.

**Comparison of In-house-OCR Performance**

In-house-OCR provides an evaluation of performance across multiple languages and text types. Our model demonstrates outstanding accuracy, with the lowest edit distances in all evaluated scripts.
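For readers unfamiliar with the metric, edit distance counts the minimum number of character insertions, deletions, and substitutions needed to turn the predicted text into the reference text, so lower is better. The following is a minimal sketch of a character-level Levenshtein distance with one common normalization (division by the longer string's length). The exact normalization and text preprocessing used in the benchmarks are not stated here, so treat those details as assumptions.

```python
def levenshtein(a: str, b: str) -> int:
    """Character-level Levenshtein distance using two rolling rows."""
    if len(a) < len(b):
        a, b = b, a  # keep the inner row as short as possible
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution or match
            ))
        previous = current
    return previous[-1]


def normalized_edit_distance(prediction: str, reference: str) -> float:
    """Edit distance divided by the longer length (an assumed normalization)."""
    longest = max(len(prediction), len(reference))
    if longest == 0:
        return 0.0
    return levenshtein(prediction, reference) / longest


if __name__ == "__main__":
    print(levenshtein("kitten", "sitting"))
    print(normalized_edit_distance("PaddleOCR", "PaddleOCR"))
```

A score of 0.0 means the prediction matches the reference exactly, and 1.0 means no characters could be reused.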

#### 2. Table

**Comparison of In-house-Table Performance**

Our self-built evaluation set contains diverse types of table images, covering Chinese, English, and mixed Chinese-English tables, as well as tables with various characteristics: full, partial, or no borders; book and manual formats; lists; academic papers; merged cells; and low-quality or watermarked images. PaddleOCR-VL achieves remarkable performance across all categories.

#### 3. Formula

**Comparison of In-house-Formula Performance**

The In-house-Formula evaluation set contains simple prints, complex prints, camera scans, and handwritten formulas. PaddleOCR-VL demonstrates the best performance in every category.

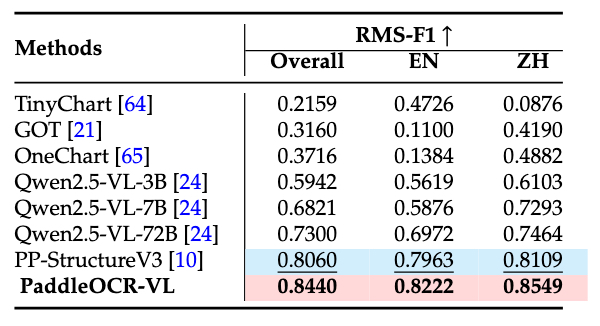

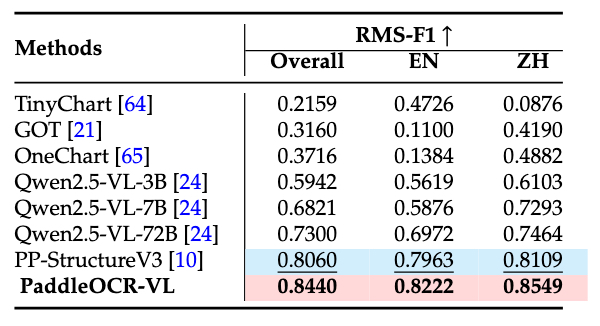

#### 4. Chart

**Comparison of In-house-Chart Performance**

The evaluation set is divided into 11 chart categories: bar-line hybrid, pie, 100% stacked bar, area, bar, bubble, histogram, line, scatterplot, stacked area, and stacked bar. PaddleOCR-VL not only outperforms expert OCR VLMs but also surpasses some 72B-level multimodal language models.

## Visualization

### Comprehensive Document Parsing

### Text

### Table

### Formula

### Chart

## Acknowledgments

We would like to thank [ERNIE](https://github.com/PaddlePaddle/ERNIE), [Keye](https://github.com/Kwai-Keye/Keye), [MinerU](https://github.com/opendatalab/MinerU), and [OmniDocBench](https://github.com/opendatalab/OmniDocBench) for providing valuable code, model weights, and benchmarks. We also appreciate everyone's contribution to this open-source project!

## Citation

If you find PaddleOCR-VL helpful, feel free to give us a star and cite the technical report.

```bibtex
@misc{paddleocrvl2025technicalreport,
  title={PaddleOCR-VL: Boosting Multilingual Document Parsing via a 0.9B Ultra-Compact Vision-Language Model},
  author={Cui, C. et al.},
  year={2025},
  primaryClass={cs.CL},
  howpublished={\url{https://ernie.baidu.com/blog/publication/PaddleOCR-VL_Technical_Report.pdf}}
}
```